The release of Gemma 4 marks a significant milestone in the evolution of lightweight large language models. While earlier versions of Gemma established a reputation for efficiency and accessibility, Gemma 4 introduces meaningful architectural, performance, and usability upgrades. These improvements are not merely incremental; they reflect a strategic shift toward higher reliability, enhanced multimodal capability, and stronger real-world deployment readiness.

TL;DR: Gemma 4 delivers substantial performance gains over previous versions, with improved reasoning, better context handling, stronger multimodal support, and enhanced efficiency. It reduces hallucinations, improves fine-tuning flexibility, and enables more practical enterprise deployment. These advancements matter because they make smaller, open models more competitive with larger proprietary systems. For developers and organizations, Gemma 4 represents a more capable and reliable foundation for AI-powered applications.

To understand why Gemma 4 matters, it is important to examine how it differs from earlier iterations and what those differences mean in practical terms.

Architectural Improvements: A More Refined Foundation

Previous Gemma versions focused on compact model efficiency, enabling strong performance within constrained hardware environments. Gemma 4 builds on that philosophy but refines the architecture for greater depth and stability.

Key architectural enhancements include:

- Optimized transformer layers for better token efficiency

- Improved attention mechanisms to reduce context fragmentation

- Refined training datasets with higher quality filtering

- Parameter scaling that better balances speed against reasoning depth

The most important change is not simply an increase in size or complexity. Instead, Gemma 4 makes smarter use of parameters, improving reasoning performance without proportionally increasing computational overhead. That balance is critical for developers who need deployable AI systems that do not require massive infrastructure.

This architectural refinement reduces training instability and improves output consistency. Earlier versions occasionally struggled with long-form coherence. Gemma 4 demonstrates noticeably stronger structural integrity in multi-paragraph responses and complex analytical prompts.

Context Window Expansion and Memory Handling

One of the clearest functional upgrades in Gemma 4 is improved context handling. Previous versions were effective for short-to-medium interactions but could lose nuance in extended sessions. Gemma 4 extends its workable context window and refines internal memory representation.

Why this matters:

- Longer documents can be processed in a single pass

- Conversations remain coherent over extended sessions

- Fewer contradictions appear in complex reasoning tasks

- Summarization performance improves significantly

For enterprise users analyzing contracts, reports, or technical documentation, extended context capability directly translates into greater productivity. The model can reason across larger bodies of information without fragmenting insights.
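
To make the single-pass point concrete, here is a minimal sketch of how an application might decide whether a document fits a given context window or has to be split. The four-characters-per-token ratio is a rough heuristic, and the window sizes in the example (8,192 and 131,072 tokens) are illustrative assumptions, not published Gemma 4 figures; `estimate_tokens` and `plan_passes` are hypothetical helper names.

```python
import math

CHARS_PER_TOKEN = 4  # rough heuristic (assumption); real tokenizers vary by language and content


def estimate_tokens(text: str) -> int:
    """Estimate the token count of `text` from its character length."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def plan_passes(text: str, context_tokens: int, reserved_tokens: int = 1024) -> list[str]:
    """Split `text` into consecutive pieces that each fit the prompt budget.

    `context_tokens` is the model's context window (supplied by the caller);
    `reserved_tokens` is held back for instructions and the generated output.
    """
    budget = context_tokens - reserved_tokens
    if budget <= 0:
        raise ValueError("reserved_tokens must be smaller than context_tokens")
    max_chars = budget * CHARS_PER_TOKEN
    return [text[i:i + max_chars] for i in range(0, len(text), max_chars)]


document = "x" * 200_000  # stand-in for a document of roughly 50,000 tokens
print(len(plan_passes(document, context_tokens=8_192)))    # 7 passes with the smaller window
print(len(plan_passes(document, context_tokens=131_072)))  # 1 pass with the larger window
```

With the smaller window the document has to be processed in seven separate pieces, so any reasoning that spans pieces must be stitched together by the application. With the larger window the whole document fits in one prompt.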

Enhanced Reasoning and Reduced Hallucination

One of the most persistent criticisms of earlier language models, including previous Gemma releases, was hallucination: producing confident but incorrect information. While no model fully eliminates this risk, Gemma 4 demonstrates measurable improvements.

The model achieves this through:

- Stronger factual alignment training

- Improved reinforcement learning tuning

- Higher-quality evaluation benchmarks

- Better calibration of uncertainty responses

Instead of fabricating answers when uncertain, Gemma 4 is more likely to signal ambiguity. This subtle change makes the model more trustworthy in research, legal drafting, and technical documentation contexts.
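
One way an application could act on uncertainty signals is to abstain when the model's own token probabilities are low. The sketch below is an application-side illustration, not a description of how Gemma 4 is trained or how it works internally. It assumes the serving stack exposes per-token log-probabilities; the function names and the 0.6 threshold are hypothetical.

```python
import math


def sequence_confidence(token_logprobs: list[float]) -> float:
    """Geometric-mean token probability, i.e. exp(mean log-probability)."""
    if not token_logprobs:
        return 0.0
    return math.exp(sum(token_logprobs) / len(token_logprobs))


def answer_or_abstain(answer: str, token_logprobs: list[float], threshold: float = 0.6) -> str:
    """Return the answer only when its confidence clears `threshold`."""
    if sequence_confidence(token_logprobs) >= threshold:
        return answer
    return "I am not certain about this; please verify against a primary source."


confident = [math.log(0.9)] * 5                        # every token generated with p = 0.9
shaky = [math.log(0.9), math.log(0.2), math.log(0.3)]  # two low-probability tokens
print(answer_or_abstain("Paris", confident))  # confidence 0.9, answer is returned
print(answer_or_abstain("Lyon", shaky))       # confidence about 0.38, abstains
```

A threshold like this is only useful if the model's probabilities are well calibrated, which is why calibration matters for this kind of downstream check.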

Multi-step reasoning, such as step-by-step problem solving, also shows improved depth compared to earlier versions. Mathematical explanations and structured analyses demonstrate fewer skipped steps and clearer progression.

Multimodal Capabilities: A Broader Scope

The earliest Gemma releases were text-only, and Gemma 3 added image input in its larger variants. Gemma 4 expands into richer multimodal capability, allowing integration with visual inputs and broader cross-modal processing.

This expansion enables:

- Image-based question answering

- Document layout interpretation

- Cross-referencing text with visual context

- Enhanced caption generation

Multimodal systems matter because modern data is not purely textual. Business workflows regularly include PDFs, scanned documents, design files, dashboards, and charts. By supporting multimodal reasoning, Gemma 4 moves closer to practical workplace integration.

Efficiency and Deployment Flexibility

One of Gemma’s foundational strengths has always been efficiency. Gemma 4 preserves that advantage while improving runtime optimization.

Efficiency improvements include:

- Better inference speed per token

- Reduced memory footprint under optimized configurations

- Improved quantization support

- Stronger compatibility with edge deployments

For developers deploying AI in production, this means:

- Lower operational cost

- Greater scalability

- Improved responsiveness in user-facing applications

- Feasibility on mid-range hardware

The ability to run efficiently without sacrificing quality distinguishes Gemma 4 from larger, more resource-intensive models. Organizations that require privacy-focused on-device processing particularly benefit from these optimizations.
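
The link between quantization and memory footprint comes down to simple arithmetic: weight memory is roughly the parameter count times the bits per weight. The sketch below uses a hypothetical 4-billion-parameter model, which is an assumption for illustration and not a published Gemma 4 size. It counts weights only and ignores the KV cache, activations, and per-block quantization metadata.

```python
def weight_memory_gib(num_params: int, bits_per_weight: int) -> float:
    """Approximate memory needed to hold the weights alone, in GiB."""
    return num_params * bits_per_weight / 8 / 2**30


num_params = 4_000_000_000  # hypothetical 4B-parameter model (assumption)
for bits in (16, 8, 4):
    print(f"{bits:>2}-bit weights: {weight_memory_gib(num_params, bits):.2f} GiB")
```

Under these assumptions the weights shrink from about 7.45 GiB at 16 bits to about 1.86 GiB at 4 bits, which is the difference between needing a workstation GPU and fitting on mid-range or edge hardware.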

Fine-Tuning and Customization Improvements

Customization is essential for serious deployment. Earlier versions of Gemma allowed fine-tuning, but integration could require substantial experimentation to achieve stable results. Gemma 4 refines this process.

Key advances include:

- Improved parameter-efficient fine-tuning techniques

- Better adaptation to domain-specific corpora

- Smoother reinforcement tuning workflows

- Reduced overfitting tendencies

This makes Gemma 4 more attractive to:

- Healthcare technology developers

- Financial services platforms

- Legal AI solution providers

- Educational software companies

Customization no longer requires extensive retraining cycles to produce acceptable performance. Instead, targeted adaptation can achieve high domain alignment with less computational strain.
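
To see why parameter-efficient fine-tuning reduces computational strain, consider LoRA, one widely used technique of this kind. The article does not say which methods Gemma 4 targets, so LoRA is used here purely as an illustration. It freezes a weight matrix of shape `d_out x d_in` and trains two small factors of rank `r` instead. The hidden size of 4096 and the rank of 8 are hypothetical values.

```python
def lora_trainable_params(d_in: int, d_out: int, rank: int) -> int:
    """Parameters in the two low-rank factors A (rank x d_in) and B (d_out x rank)."""
    return rank * (d_in + d_out)


def trainable_fraction(d_in: int, d_out: int, rank: int) -> float:
    """Trainable LoRA parameters relative to the size of the frozen d_out x d_in matrix."""
    return lora_trainable_params(d_in, d_out, rank) / (d_in * d_out)


d = 4096  # hypothetical hidden size, for illustration only
print(lora_trainable_params(d, d, rank=8))        # 65536
print(f"{trainable_fraction(d, d, rank=8):.4%}")  # 0.3906%
```

For this one matrix, the adapter trains well under one percent as many parameters as full fine-tuning would update, which is where the savings in memory and retraining time come from.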

Performance Comparison: Gemma 4 vs Previous Versions

The following comparison highlights the most meaningful differences:

| Feature | Gemma 2 | Gemma 3 | Gemma 4 |
|---|---|---|---|
| Context Window | Moderate | Expanded | Significantly Expanded |
| Reasoning Depth | Good | Improved | Advanced Structured Reasoning |
| Hallucination Control | Basic Mitigation | Enhanced Filtering | Calibrated Uncertainty Response |
| Multimodal Support | Limited or None | Preliminary | Robust Integrated Capability |
| Fine-Tuning Efficiency | Moderate | Improved | Highly Streamlined |
| Deployment Flexibility | Efficient | More Optimized | Enterprise-Ready Optimization |

This comparison makes clear that Gemma 4 is not merely incremental. The model reflects maturity rather than simple expansion.

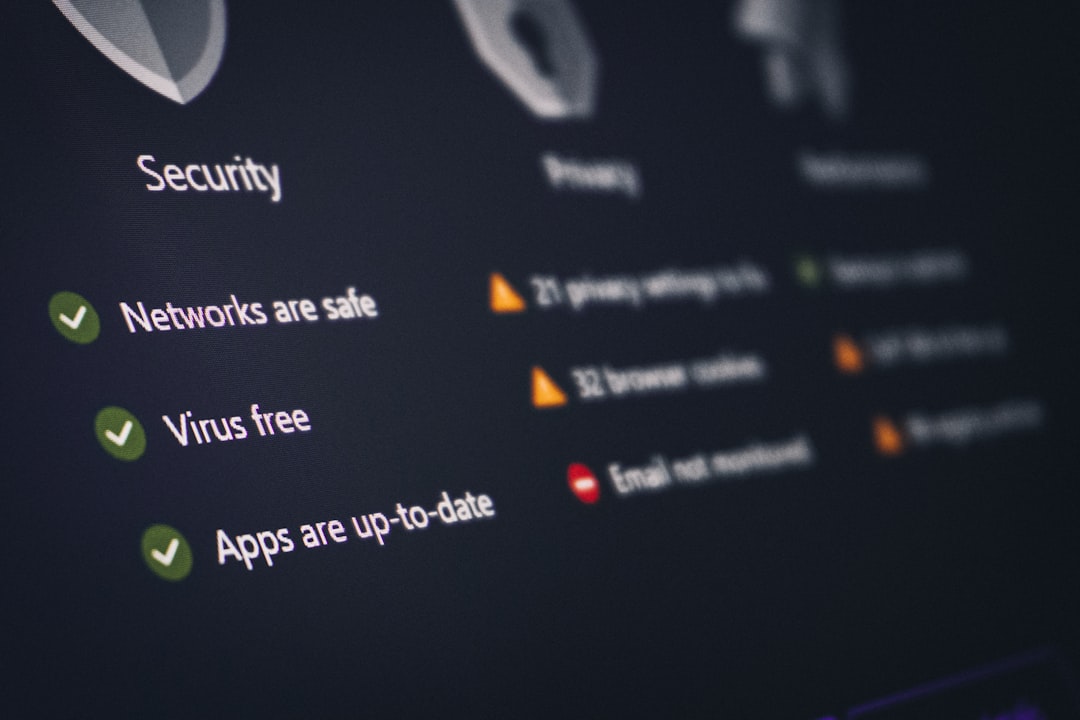

Security and Governance Improvements

Responsible deployment requires attention to safety and governance. Gemma 4 incorporates tighter safeguards and improved moderation alignment.

Updates in this area include:

- More robust content filtering

- Clearer refusal behavior in unsafe scenarios

- Improved monitoring compatibility

- Stronger alignment with policy guardrails

For enterprise adoption, governance matters as much as performance. Gemma 4’s strengthened oversight framework makes it more suitable for regulated industries where compliance is not optional.

Why These Changes Matter

The broader significance of Gemma 4 lies in what it represents for the open-model ecosystem. Historically, smaller and more efficient models required trade-offs in depth and reliability. Gemma 4 narrows that gap.

It matters because:

- Organizations gain competitive capability without extreme infrastructure cost

- Developers can deploy advanced AI locally or privately

- Reduced hallucination improves trust in professional settings

- Multimodal functionality expands real-world applicability

In short, Gemma 4 strengthens the case that compact, optimized models can rival far larger systems in meaningful use cases.

The Strategic Direction Forward

Gemma 4 signals a shift from rapid iteration toward refinement and stability. Rather than emphasizing parameter expansion alone, the focus appears to be on dependability, customization, and measured capability growth.

For businesses making long-term AI infrastructure decisions, this stability is critical. Systems must not simply demonstrate strong benchmark performance; they must remain consistent, controllable, and adaptable.

Gemma 4 reflects a deliberate move in that direction. Its improvements in reasoning integrity, context stability, efficiency, and deployment readiness combine to form a model that is not only more powerful but also more practical.

Conclusion

Gemma 4 represents a substantial evolution over previous versions. By strengthening architecture, expanding context handling, refining reasoning capability, enhancing multimodal performance, and improving fine-tuning efficiency, it delivers meaningful progress rather than superficial iteration.

Most importantly, these changes translate into tangible real-world benefits: lower operational costs, greater deployment flexibility, improved reliability, and expanded application scope. For developers, enterprises, and researchers evaluating advanced yet efficient AI models, Gemma 4 stands as a serious and strategically significant advancement.

The evolution from earlier Gemma versions to Gemma 4 is not simply about performance metrics; it is about maturity. And in a rapidly advancing AI landscape, maturity is what ultimately determines lasting impact.